Introduction

Modern digital systems depend heavily on speed, responsiveness, and real-time data processing. As applications become more complex and the number of connected devices continues to grow worldwide, traditional centralized computing models are facing growing performance limitations.

For many years, cloud computing served as the primary architecture for storing, processing, and managing data. Centralized cloud environments offered scalability, flexibility, and cost efficiency.

However, the rapid growth of technologies such as the Internet of Things, autonomous systems, smart manufacturing, video streaming, online gaming, and artificial intelligence has significantly increased demand for low-latency computing.

In many situations, sending data to distant cloud servers introduces delays that centralized architectures cannot eliminate, because the round trip itself takes time.

This challenge has accelerated the adoption of edge computing.

Edge computing moves data processing closer to the physical location where data is generated. Instead of relying entirely on centralized cloud infrastructure, organizations deploy computing resources near devices, sensors, users, or local networks.

This architectural shift fundamentally changes how businesses design systems, manage workloads, and optimize latency-sensitive applications.

This article explores how edge computing changes latency architecture decisions and why decentralized computing is becoming increasingly important for modern digital infrastructure.

Understanding Latency in Modern Systems

Latency is the time between sending a request and receiving a response, including both network travel and processing time.

In digital environments, even small delays can significantly affect performance and user experience.

Latency can originate from several factors, including:

- Network distance

- Network congestion

- Server processing time

- Bandwidth limitations

- Routing inefficiencies

- Application architecture
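
The factors above can be treated as parts of a simple latency budget. The following is a minimal sketch, assuming that the contributions can be measured separately and added together; the function name `summarize_latency` and all figures are hypothetical and chosen only for illustration.

```python
def summarize_latency(components_ms: dict[str, float]) -> tuple[float, str]:
    """Return the total delay in ms and the name of the largest contributor."""
    if not components_ms:
        raise ValueError("at least one latency component is required")
    total = sum(components_ms.values())
    # max() over the keys, ranked by their associated delay
    dominant = max(components_ms, key=components_ms.get)
    return total, dominant


# Hypothetical figures for a single request, in milliseconds.
example_request = {
    "network_distance": 40.0,
    "network_congestion": 12.0,
    "server_processing": 8.0,
    "bandwidth_limits": 5.0,
    "routing": 3.0,
    "application": 6.0,
}

total_ms, dominant = summarize_latency(example_request)
print(f"total: {total_ms:.1f} ms, largest contributor: {dominant}")
```

A breakdown like this helps show which factor is worth optimizing first. When network distance dominates, moving processing closer to the source is likely to help more than tuning the server.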

Traditional cloud computing often involves sending data from devices to centralized data centers located far from end users.

While this model works effectively for many applications, latency-sensitive systems may experience unacceptable delays.

For example:

- Autonomous vehicles require near-instant decision-making

- Industrial robots need real-time coordination

- Online gaming depends on fast responsiveness

- Video analytics systems require rapid processing

- Healthcare monitoring systems need immediate alerts

As digital systems become more interactive and real-time dependent, minimizing latency becomes a critical architectural priority.

What Is Edge Computing?

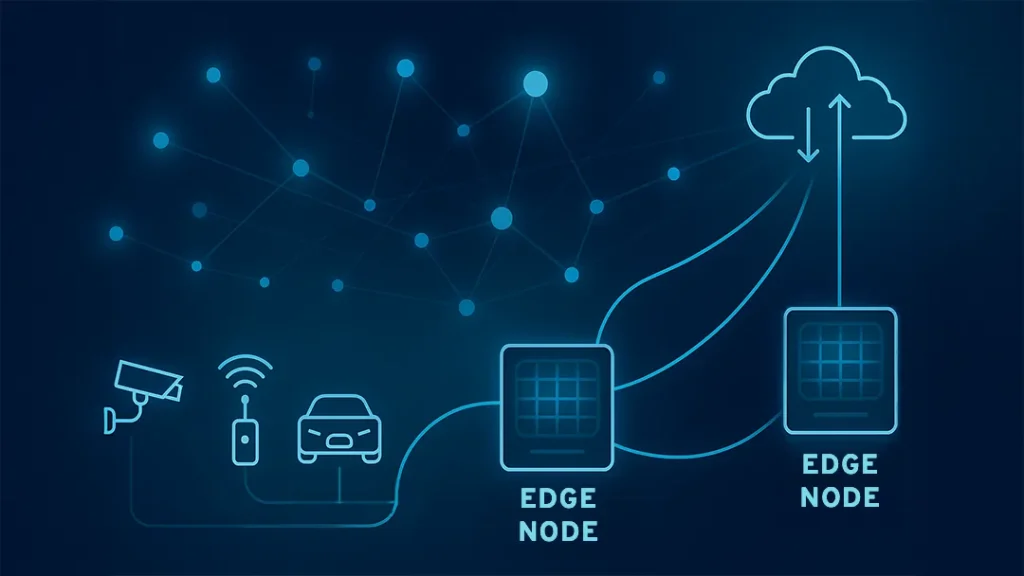

Edge computing is a distributed computing model that places processing power closer to the source of data generation.

Instead of transmitting all information to centralized cloud servers, edge systems process data locally or near the network edge.

Edge infrastructure may include:

- Local servers

- IoT gateways

- Smart devices

- Regional micro data centers

- Telecommunications edge nodes

- Embedded processing units

The primary goal is to reduce the physical and network distance between users, devices, and computing resources.

By processing data closer to the source, edge computing can shorten response times and reduce the load on wide-area networks.

Why Traditional Cloud Architectures Create Latency Challenges

Centralized cloud systems rely on large data centers that may be geographically distant from users and devices.

Latency grows with the distance data travels and the number of network hops it crosses.

For applications requiring real-time responsiveness, even milliseconds matter.

Traditional cloud models face several latency-related limitations:

Long-Distance Data Transmission

Data must travel between end devices and centralized cloud servers.

This increases round-trip communication time.

Network Congestion

Large volumes of traffic can create bottlenecks that slow response times.

Centralized Processing Delays

Cloud systems handling millions of requests simultaneously may experience processing queues.

Dependence on Stable Connectivity

Cloud architectures often rely heavily on continuous internet access.

Connectivity interruptions can reduce reliability.

These limitations become more serious as connected devices generate larger amounts of real-time data.

Edge Computing Reduces Physical Distance

One of the most important ways edge computing improves latency is by reducing physical distance.

The closer processing systems are to data sources, the less time signals spend in transit.

For example:

- Smart factories can process machine data locally

- Retail stores can analyze customer activity on-site

- Autonomous vehicles can process sensor inputs directly within vehicles

- Smart cities can manage traffic signals through local edge nodes

Reducing distance minimizes network travel time and improves responsiveness.

This architectural principle is central to latency optimization.
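
The effect of distance can be estimated with simple arithmetic. The sketch below assumes that signals in optical fiber travel at roughly two thirds of the speed of light, or about 200 km per millisecond, and it ignores routing detours, queuing, and processing time. The function name and the example distances are illustrative assumptions, so the results are lower bounds rather than measurements.

```python
# Approximate signal speed in optical fiber: about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def round_trip_propagation_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical distances for three deployment options.
for label, km in [
    ("on-site edge node", 1),
    ("regional data center", 300),
    ("distant cloud region", 3000),
]:
    print(f"{label}: at least {round_trip_propagation_ms(km):.2f} ms round trip")
```

Even under these idealized assumptions, a server thousands of kilometers away adds tens of milliseconds that no amount of server tuning can remove.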

Real-Time Decision-Making Becomes Possible

Many modern applications depend on immediate decision-making.

Traditional cloud systems may not respond quickly enough for critical operations.

Edge computing enables near real-time processing by handling data locally.

Industries benefiting from low-latency edge processing include:

- Healthcare

- Manufacturing

- Transportation

- Telecommunications

- Energy management

- Financial services

For example, industrial automation systems may require millisecond-level response times to prevent equipment failures or safety incidents.

Similarly, autonomous vehicles cannot rely entirely on distant cloud servers when making navigation decisions.

Edge computing allows systems to react as fast as local processing permits, without waiting for a round trip to the cloud.

IoT Growth Is Accelerating Edge Adoption

The Internet of Things has become one of the largest drivers of edge computing.

Billions of connected devices continuously generate enormous volumes of data.

Examples include:

- Smart sensors

- Wearable devices

- Industrial equipment

- Security cameras

- Smart home systems

- Environmental monitoring devices

Sending all IoT data to centralized clouds creates significant bandwidth and latency challenges.

Edge computing helps solve this problem by filtering, analyzing, and processing data locally.

Often, only relevant or summarized information needs to be transmitted to centralized systems.

This reduces bandwidth consumption while improving responsiveness.
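
Local summarization of this kind can be sketched as follows. This is an illustrative example only: the function `summarize_window`, the record fields, and the alert threshold are assumptions, and a real deployment would choose its own window size and summary statistics.

```python
from statistics import mean


def summarize_window(readings: list[float], alert_threshold: float) -> dict:
    """Collapse a window of raw sensor readings into one small record."""
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
        # Raw values are kept only when they exceed the threshold.
        "alerts": [r for r in readings if r > alert_threshold],
    }


# Hypothetical temperature window: four readings become one summary record.
summary = summarize_window([20.0, 21.0, 22.0, 95.0], alert_threshold=90.0)
print(summary)
```

The edge node forwards one summary per window instead of every reading, while values that exceed the threshold still reach the central system unchanged.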

Bandwidth Optimization Changes Architecture Priorities

Traditional architectures often assume centralized processing for most workloads.

Edge computing changes this assumption by prioritizing selective data transmission.

Instead of sending every raw data point to the cloud, edge systems may:

- Preprocess information

- Filter unnecessary data

- Compress content

- Trigger local actions

- Send only critical insights centrally

This reduces network congestion and improves system efficiency.
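
Two of the steps listed above, filtering and compression, can be sketched with the Python standard library. The deadband filter shown here is one common filtering technique and is an assumption of this example rather than a method prescribed by the article; the function names and the tolerance value are hypothetical.

```python
import json
import zlib


def deadband_filter(values: list[float], tolerance: float) -> list[float]:
    """Keep a value only if it differs from the last kept value by more than tolerance."""
    kept: list[float] = []
    for value in values:
        if not kept or abs(value - kept[-1]) > tolerance:
            kept.append(value)
    return kept


def pack_for_uplink(values: list[float]) -> bytes:
    """Serialize values as JSON and compress them with zlib before sending."""
    return zlib.compress(json.dumps(values).encode("utf-8"))


def unpack(payload: bytes) -> list[float]:
    """Reverse pack_for_uplink on the receiving side."""
    return json.loads(zlib.decompress(payload).decode("utf-8"))


raw = [10.0, 10.1, 10.2, 11.0, 11.05, 9.0]
filtered = deadband_filter(raw, tolerance=0.5)
payload = pack_for_uplink(filtered)
print(f"{len(raw)} readings reduced to {len(filtered)}, payload is {len(payload)} bytes")
```

The filter discards readings that barely changed, and compression shrinks what remains. How much is saved depends on the data, so the gain should be measured on real traffic rather than assumed.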

Bandwidth optimization is especially important for applications involving:

- Video streaming

- Surveillance systems

- Industrial sensors

- Remote monitoring

- Smart infrastructure

Organizations must now design architectures that balance local processing with centralized analytics.

Edge Computing Improves Reliability

Latency architecture decisions are not only about speed. Reliability also matters.

Edge computing can improve reliability by reducing dependence on centralized connectivity.

In traditional cloud-only systems, network outages may interrupt functionality.

Edge systems can continue operating locally even when cloud connectivity becomes unstable.

This is especially valuable for:

- Remote industrial operations

- Healthcare environments

- Transportation systems

- Military applications

- Emergency response systems

Local processing improves resilience because critical operations do not always depend on external networks.

This architectural advantage is becoming increasingly important for mission-critical applications.
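
One way to keep operating through an outage is a store-and-forward buffer: records are queued locally and sent once the uplink returns. The sketch below is a simplified illustration under stated assumptions. The class name, the `send` callback, and the in-memory queue are hypothetical, and a production system would usually persist the queue to disk.

```python
from collections import deque
from typing import Callable


class StoreAndForwardBuffer:
    """Queue records locally and forward them when the uplink is available."""

    def __init__(self, send: Callable[[dict], bool], max_records: int = 1000):
        self._send = send  # hypothetical uplink call, returns True on success
        # A bounded deque silently drops the oldest record when it is full.
        self._pending: deque = deque(maxlen=max_records)

    def submit(self, record: dict) -> None:
        """Accept a record locally, then try to forward everything pending."""
        self._pending.append(record)
        self.flush()

    def flush(self) -> int:
        """Send pending records in order and stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)
```

Local logic calls `submit` regardless of connectivity. While the cloud is unreachable, records accumulate up to `max_records`, and the next successful `flush` delivers them in their original order.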

Distributed Architectures Become More Complex

While edge computing improves latency, it also increases architectural complexity.

Centralized systems are generally easier to manage because resources are consolidated in a few locations.

Distributed edge environments introduce new challenges such as:

- Device coordination

- Infrastructure management

- Software deployment

- Security monitoring

- Data synchronization

- Network orchestration

Organizations must now manage computing resources across multiple geographic locations.

This requires advanced automation, monitoring, and operational frameworks.

Latency optimization often involves trade-offs between simplicity and performance.

Security Architecture Must Evolve

Edge computing changes security architecture significantly.

Centralized cloud systems concentrate security controls within large data centers.

Edge environments distribute infrastructure across many endpoints and locations.

This expands the attack surface.

Potential security challenges include:

- Physical device tampering

- Unauthorized access

- Distributed denial-of-service attacks

- Endpoint vulnerabilities

- Data interception risks

Organizations must implement stronger edge security strategies such as:

- Zero-trust frameworks

- Encryption

- Device authentication

- Secure boot systems

- Continuous monitoring

Security decisions now play a major role in edge architecture design.
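
As a small illustration of device authentication, an edge device can sign each message with a secret key so that the receiver can detect tampering or impersonation. The sketch below uses HMAC-SHA256 from the Python standard library. It assumes that each device has been provisioned with its own secret key, which is an assumption of this example, and it does not cover key distribution, rotation, or replay protection.

```python
import hashlib
import hmac


def sign_message(device_key: bytes, message: bytes) -> str:
    """Return a hex HMAC-SHA256 signature for the message."""
    return hmac.new(device_key, message, hashlib.sha256).hexdigest()


def verify_message(device_key: bytes, message: bytes, signature: str) -> bool:
    """Check a signature using a constant-time comparison."""
    expected = sign_message(device_key, message)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature)


# Hypothetical key and payload, for illustration only.
key = b"per-device-secret"
payload = b'{"sensor": "pump-7", "pressure": 4.2}'
signature = sign_message(key, payload)
print(verify_message(key, payload, signature))
```

A message altered in transit, or one signed with a different key, fails verification and can be rejected at the gateway before any processing takes place.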

Data Governance Becomes More Challenging

Edge computing changes how organizations manage data governance.

Traditional cloud systems centralize storage and compliance management.

Edge architectures distribute data processing across many environments.

This creates governance challenges involving:

- Data privacy

- Regulatory compliance

- Data consistency

- Retention policies

- Cross-border data handling

Industries such as healthcare and finance must carefully manage sensitive information processed at edge locations.

Organizations often need hybrid governance models combining centralized oversight with localized processing controls.

Hybrid Cloud and Edge Models Are Emerging

Most organizations do not fully replace cloud infrastructure with edge computing.

Instead, hybrid architectures are becoming more common.

In hybrid environments:

- Edge systems handle latency-sensitive tasks

- Cloud platforms manage large-scale analytics

- Centralized systems support long-term storage

- AI training occurs in cloud environments

- Real-time inference occurs at the edge

This layered architecture allows organizations to balance scalability, latency, and operational efficiency.

Architectural decisions increasingly depend on determining which workloads belong at the edge versus the cloud.
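
A placement decision of this kind can be expressed as a simple rule. The following is a minimal sketch, assuming that each workload has a known latency budget and that the cloud round-trip and processing times have been measured. The `Workload` fields, the `place` function, and the example numbers are all hypothetical, and real decisions would also weigh cost, data volume, and governance.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    latency_budget_ms: float     # maximum acceptable response time
    needs_offline: bool = False  # must keep running without cloud connectivity


def place(workload: Workload, cloud_rtt_ms: float, cloud_processing_ms: float) -> str:
    """Return "edge" or "cloud" for a workload under a simple latency rule."""
    if workload.needs_offline:
        return "edge"
    # The cloud qualifies only if its full response time fits within the budget.
    if cloud_rtt_ms + cloud_processing_ms > workload.latency_budget_ms:
        return "edge"
    return "cloud"


# Hypothetical workloads and measurements.
workloads = [
    Workload("real-time inference", latency_budget_ms=20),
    Workload("large-scale analytics", latency_budget_ms=60_000),
    Workload("safety shutdown", latency_budget_ms=500, needs_offline=True),
]
for w in workloads:
    print(w.name, "->", place(w, cloud_rtt_ms=60, cloud_processing_ms=15))
```

The rule defaults to the cloud and moves a workload to the edge only when the latency budget or an offline requirement forces it, which keeps the distributed footprint as small as the requirements allow.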

Telecommunications Networks Support Edge Growth

The expansion of 5G networks is accelerating edge computing adoption.

5G offers:

- Lower latency

- Higher bandwidth

- Faster data transfer

- Improved device connectivity

Telecommunications providers are deploying edge infrastructure closer to users through regional data centers and edge nodes.

This enables faster processing for applications such as:

- Augmented reality

- Virtual reality

- Smart transportation

- Autonomous systems

- Real-time analytics

The combination of edge computing and 5G is reshaping digital infrastructure strategies worldwide.

Artificial Intelligence Depends on Low Latency

Artificial intelligence applications increasingly rely on edge computing.

AI systems often require immediate processing for tasks such as:

- Facial recognition

- Object detection

- Predictive maintenance

- Autonomous navigation

- Voice recognition

Sending AI workloads to centralized clouds may introduce delays unsuitable for real-time operations.

Edge AI allows models to process data locally with minimal latency.

This improves responsiveness and can improve privacy, because raw data does not have to leave the device.

Organizations are increasingly deploying lightweight AI models directly on edge devices.

Cost Considerations Influence Architecture Decisions

Edge computing can reduce bandwidth and cloud processing costs.

However, deploying distributed infrastructure also creates new expenses.

Organizations must consider:

- Hardware deployment

- Maintenance costs

- Monitoring systems

- Edge orchestration tools

- Security management

- Energy consumption

Architectural decisions often involve balancing performance improvements against operational complexity and infrastructure investment.

Not every application requires edge processing.

Businesses must evaluate whether low-latency requirements justify distributed deployment costs.
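
The trade-off can be framed as a rough cost comparison. The sketch below is a deliberately simplified model with hypothetical parameter names and placeholder prices. It assumes that cloud costs scale linearly with the volume of data sent, and that edge infrastructure adds a fixed monthly cost covering hardware, maintenance, monitoring, and energy.

```python
def monthly_cost_cloud_only(gb_generated: float, cost_per_gb: float) -> float:
    """All generated data is transferred to and processed in the cloud."""
    return gb_generated * cost_per_gb


def monthly_cost_with_edge(gb_generated: float, fraction_forwarded: float,
                           cost_per_gb: float, edge_fixed_cost: float) -> float:
    """Only a fraction of the data reaches the cloud; edge adds a fixed cost."""
    if not 0.0 <= fraction_forwarded <= 1.0:
        raise ValueError("fraction_forwarded must be between 0 and 1")
    return edge_fixed_cost + gb_generated * fraction_forwarded * cost_per_gb


# Placeholder figures, not real prices.
cloud = monthly_cost_cloud_only(gb_generated=2000, cost_per_gb=0.5)
edge = monthly_cost_with_edge(gb_generated=2000, fraction_forwarded=0.25,
                              cost_per_gb=0.5, edge_fixed_cost=400)
print(f"cloud only: {cloud:.2f}, with edge: {edge:.2f}")
```

With these placeholder inputs the edge option is cheaper, but a higher fixed cost or a smaller data volume reverses the result, which is why the evaluation has to be done per application.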

The Future of Latency-Centered Architecture

As digital systems become increasingly interactive, latency will continue shaping infrastructure design.

Future architectures will likely emphasize:

- Distributed processing

- Real-time analytics

- Local AI inference

- Autonomous operations

- Intelligent network routing

- Adaptive edge orchestration

Organizations are moving away from purely centralized systems toward flexible architectures optimized for responsiveness.

Edge computing represents a major evolution in how digital infrastructure is designed and managed.

The future of enterprise technology will likely depend on combining edge, cloud, AI, and telecommunications systems into highly coordinated ecosystems.

Conclusion

Edge computing is fundamentally changing how organizations approach latency architecture decisions.

By moving processing closer to users and devices, edge systems reduce response times, improve reliability, optimize bandwidth usage, and support real-time decision-making.

This shift is becoming increasingly important as technologies such as IoT, artificial intelligence, autonomous systems, and 5G networks continue expanding.

Traditional centralized cloud architectures remain valuable, but they are no longer sufficient for every workload.

Modern digital infrastructure increasingly requires hybrid approaches that combine centralized scalability with localized responsiveness.

While edge computing introduces operational complexity, security challenges, and governance concerns, its performance advantages are driving rapid adoption across industries.

Organizations that successfully balance edge and cloud architectures will likely gain stronger scalability, faster responsiveness, and improved user experiences in an increasingly connected world.

Frequently Asked Questions

1. What is edge computing?

Edge computing is a distributed computing approach that processes data closer to where it is generated instead of relying entirely on centralized cloud servers.

2. Why is low latency important?

Low latency improves responsiveness, user experience, and real-time decision-making in applications such as autonomous systems, gaming, healthcare, and industrial automation.

3. How does edge computing reduce latency?

Edge computing reduces latency by minimizing the distance data must travel between devices and processing systems.

4. What industries benefit most from edge computing?

Industries such as healthcare, manufacturing, transportation, telecommunications, retail, and energy management benefit significantly from edge computing.

5. Does edge computing replace cloud computing?

No. Most organizations use hybrid architectures in which edge computing handles real-time processing while cloud systems manage large-scale analytics and storage.

6. How does 5G support edge computing?

5G networks provide faster connectivity, lower latency, and higher bandwidth, making edge computing more efficient and scalable.

7. What are the main challenges of edge computing?

Major challenges include distributed infrastructure management, security risks, governance complexity, synchronization issues, and increased operational overhead.